Pinecone

Learn how to build scalable, AI-driven applications using Pinecone, the leading vector database for high-performance semantic search.

Price Match Guarantee Full Lifetime Access Access on any Device Technical Support Secure Checkout Course Completion Certificate 92% Started a new career

BUY THIS COURSE (

92% Started a new career

BUY THIS COURSE (GBP 10 GBP 29 )-

83% Got a pay increase and promotion

83% Got a pay increase and promotion

Students also bought -

-

- TigerGraph

- 10 Hours

- GBP 10

- 10 Learners

-

- Amazon Neptune

- 10 Hours

- GBP 10

- 10 Learners

-

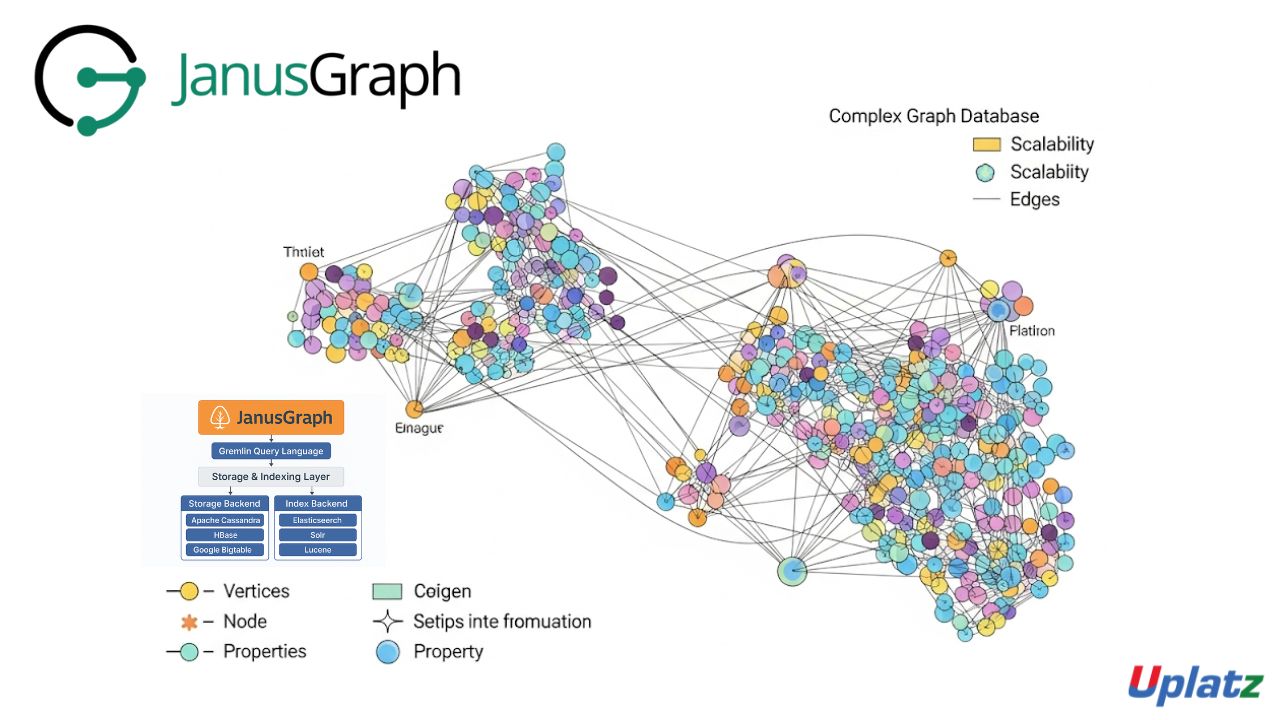

- JanusGraph

- 10 Hours

- GBP 10

- 10 Learners

In today’s AI-driven era, the ability to search, understand, and retrieve information based on semantic meaning—rather than exact keywords—has become a critical differentiator for intelligent applications. From recommendation engines and chatbots to personalized search systems and document retrieval tools, the demand for fast, scalable, and context-aware search capabilities is skyrocketing.

Pinecone has emerged as the industry-leading vector database purpose-built for this new generation of AI applications. It empowers developers to store, index, and query high-dimensional vector embeddings with millisecond latency, enabling semantic search and retrieval capabilities at scale.

This comprehensive, hands-on course — Pinecone: Master Vector Database and AI-Powered Semantic Search — equips you with both the theoretical foundations and practical implementation skills to build AI-powered search and recommendation systems using Pinecone. You’ll learn to design and deploy high-performance pipelines that understand meaning, not just keywords — transforming how users interact with data.

🔍 Why Vector Databases Matter

Traditional relational databases and keyword-based search engines are not designed to handle the semantic complexity required by modern AI systems. For example, when a user searches for “doctor” but the relevant documents say “physician,” or when you want to recommend products based on user behavior instead of matching metadata, exact-match systems fall short because they compare tokens and field values rather than meaning.

Vector databases solve this challenge by managing and indexing embeddings — numerical representations of data such as text, images, or audio — in high-dimensional space. These embeddings are generated using AI models like OpenAI Embeddings, Hugging Face Transformers, or CLIP for visual data.

By transforming unstructured data into mathematical vectors, a vector database enables approximate nearest neighbor (ANN) searches to find items that are semantically similar, not just textually identical. This is where Pinecone excels — offering the tools to store, organize, and query vectors efficiently and at scale, delivering lightning-fast semantic search for real-world applications.
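To make the idea concrete, here is a minimal sketch of similarity search over embeddings. The three-dimensional vectors are made-up toy values rather than real model output, and the search is an exact brute-force scan, not the ANN index a vector database would use. The names `EMBEDDINGS`, `cosine_similarity`, and `nearest` are illustrative only.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy embeddings: hypothetical values chosen for illustration only.
EMBEDDINGS = {
    "doctor": [0.90, 0.10, 0.05],
    "physician": [0.88, 0.12, 0.07],
    "guitar": [0.05, 0.20, 0.95],
}


def nearest(query_word, k=1):
    """Rank every other word by cosine similarity to the query (exact scan)."""
    query = EMBEDDINGS[query_word]
    scored = [
        (word, cosine_similarity(query, vec))
        for word, vec in EMBEDDINGS.items()
        if word != query_word
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# "physician" ranks first even though it shares no characters with the query.
print(nearest("doctor"))
```

An exact scan like this compares the query with every stored vector, which is why large collections rely on approximate indexes instead.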

⚙️ Why Choose Pinecone

Unlike general-purpose NoSQL databases that bolt on vector functionality, Pinecone is a vector-native database built specifically for semantic search and retrieval. It provides a fully managed, cloud-native platform that abstracts away infrastructure management so developers can focus purely on building intelligent applications.

Key advantages of Pinecone include:

- Fully managed cloud service: No setup, tuning, or server management required.

- Horizontal scalability: Supports billions of vectors and high query throughput.

- Low latency: Millisecond search results even with massive datasets.

- Rich metadata filtering: Combine contextual filtering with semantic similarity.

- Namespace management: Isolate data for multi-tenant or modular systems.

- Multiple similarity metrics: Choose from cosine, dot-product, or Euclidean distance.

- High reliability and replication: Built-in fault tolerance for production systems.

Pinecone stands out for its speed, accuracy, and developer experience, making it the go-to vector platform for building semantic search and retrieval systems that power today’s AI products.

🧠 What You’ll Learn

This course takes you on a complete journey — from understanding vector search theory to deploying advanced AI-driven applications in production. Through guided lessons and hands-on exercises, you’ll explore how to connect machine learning models with Pinecone’s vector infrastructure to build semantic search, recommendation, and retrieval-augmented generation (RAG) systems.

By the end of this course, you will:

- Understand what vector databases are and how they differ from traditional databases.

- Generate and normalize embeddings using models and APIs such as OpenAI embeddings, Hugging Face Transformers, or CLIP.

- Select the right similarity metric: cosine similarity, dot product, or Euclidean distance.

- Create, manage, and query vector indexes within Pinecone.

- Attach and query metadata for contextual search results.

- Use namespaces to organize data across multiple projects or users.

- Combine keyword-based ranking (e.g., BM25) with semantic search for hybrid models.

- Integrate Pinecone with LLM frameworks like LangChain and LlamaIndex.

- Build real-world RAG pipelines for contextual question answering.

- Optimize for speed, cost, and scalability using Pinecone’s pod-based infrastructure.

You’ll work with real datasets — text documents, images, and structured content — to create end-to-end AI applications that retrieve, rank, and recommend intelligently.

🏗️ Real-World Projects You’ll Build

Throughout the course, you’ll apply your learning to practical, production-oriented projects, including:

- Semantic Search Engine: Build a document search tool that retrieves relevant results based on meaning, not keywords.

- AI Recommendation System: Create personalized content or product recommendations using similarity-based retrieval.

- Question-Answering Assistant (RAG): Power an LLM with Pinecone to fetch context-aware responses in real time.

- Hybrid Search Platform: Combine lexical and semantic search for improved accuracy and diversity of results.

These projects provide hands-on experience that reinforces both conceptual understanding and implementation proficiency.

🌟 Why Learn Pinecone

The future of AI depends on vector-based understanding. Mastering Pinecone gives you a critical edge in one of the most important areas of modern AI engineering.

Here’s why Pinecone is a must-learn technology:

- It’s trusted by major companies for personalized search and recommendations.

- It integrates seamlessly with tools like OpenAI, Cohere, Hugging Face, and Google Vertex AI.

- It powers retrieval-augmented generation (RAG) pipelines that make LLMs more factual and context-aware.

- It enables developers to deploy AI-powered search capabilities within hours instead of weeks.

- It represents a key building block in AI-native data infrastructure.

By learning Pinecone, you position yourself at the forefront of AI-driven data retrieval — a rapidly growing skill area across industries from e-commerce and finance to healthtech and enterprise software.

👩‍💻 Who Should Take This Course

This course is designed for professionals and learners who want to bridge the gap between AI models and real-world applications through semantic search:

- Machine Learning Engineers building intelligent retrieval or recommendation systems.

- Software Developers developing AI-enabled products, chatbots, or data platforms.

- Data Scientists & NLP Practitioners handling unstructured text, image, or audio data.

- AI Architects & Researchers designing scalable, context-aware systems.

- Startup Teams & Product Managers building AI-first applications focused on personalization and discovery.

No prior experience with Pinecone is required — you’ll start from the fundamentals and progress to advanced implementations through structured lessons and guided projects.

🧩 Course Format and Learning Approach

The course combines conceptual learning, guided tutorials, and hands-on exercises to ensure deep, practical understanding.

Each module includes:

- HD video tutorials with step-by-step implementation.

- Downloadable code samples using Python SDK and Jupyter notebooks.

- Interactive assignments and practice projects.

- Integration walkthroughs with OpenAI, LangChain, and LlamaIndex.

- Best practices for scaling, indexing, and optimizing Pinecone clusters.

You’ll have lifetime access to all materials, ensuring you stay up to date as Pinecone and AI search technologies evolve.

🚀 Build the Future of AI-Powered Search

By the end of this course, you’ll not only understand how vector databases work but will also be able to design and deploy semantic search systems ready for real-world production use.

You’ll graduate with the ability to:

- Build scalable, real-time search and recommendation engines.

- Connect LLMs to external data sources via RAG pipelines.

- Combine symbolic and semantic approaches for optimal performance.

- Integrate Pinecone seamlessly into modern AI ecosystems.

Whether you’re building enterprise knowledge assistants, contextual chatbots, or personalized discovery platforms, Pinecone mastery is your key to the future of intelligent search.

Start learning Pinecone today — and take the first step toward mastering the infrastructure that powers the next generation of AI-driven, semantic applications.

Course/Topic 1 - Coming Soon

The videos for this course are currently being recorded and should be available in a few days. Please contact info@uplatz.com for the exact release date.

- Understand what vector databases are and why they are crucial in modern AI applications.

- Use the Pinecone SDK to create, manage, and scale vector indexes.

- Transform data into vector embeddings using tools like OpenAI, Hugging Face, or custom encoders.

- Perform semantic search with Pinecone using cosine or dot-product similarity.

- Apply metadata filtering and namespaces for contextual search.

- Implement hybrid search by combining keyword and vector relevance.

- Deploy Pinecone in RAG pipelines with LLMs like GPT-4 and frameworks like LangChain.

- Optimize vector indexing for performance and relevance.

- Integrate Pinecone into AI workflows such as search engines, chatbots, and product recommendations.

- What is vector search?

- Embeddings and similarity metrics

- Use cases and Pinecone overview

- Pinecone architecture and API access

- Free-tier vs. enterprise features

- Python SDK installation and setup

- Generating embeddings using OpenAI and Hugging Face

- Dimensionality and vector normalization

- Understanding cosine vs dot-product similarity

- Creating a Pinecone index

- Inserting, updating, and deleting vectors

- Querying for nearest neighbors

- Attaching metadata to vectors

- Using filters for precise results

- Organizing data by namespaces

- Combining vector and keyword search

- Using BM25 with semantic vectors

- Re-ranking with external models

- Index types: pod-based scaling

- Performance tuning and cost control

- Index size and throughput best practices

- Retrieval-Augmented Generation (RAG)

- LangChain and Pinecone workflows

- LLM memory and context injection

- Semantic document search

- Personalized product recommendations

- AI assistant with memory using Pinecone

- Vector Search Engineer

- Semantic Search Developer

- AI Architect

- NLP/LLM Engineer

- Machine Learning Specialist

Pinecone is a fully managed vector database that supports high-speed similarity search across large-scale vector embeddings.

Vector embeddings are numerical representations of data (text, images, etc.) used to perform similarity searches. Pinecone indexes and queries these embeddings efficiently.

Traditional databases are optimized for structured queries; Pinecone is designed for approximate nearest neighbor (ANN) search in high-dimensional vector space.

Pinecone supports cosine similarity, dot-product, and Euclidean distance.
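The difference between the three metrics is easiest to see on a small example. The sketch below implements them in plain Python on two hand-picked vectors. The function names are illustrative and this is not Pinecone's internal implementation.

```python
import math


def dot_product(a, b):
    """Sum of element-wise products; grows with vector magnitude."""
    return sum(x * y for x, y in zip(a, b))


def euclidean_distance(a, b):
    """Straight-line distance between the two points; smaller means closer."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def l2_normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(dot_product(v, v))
    return [x / norm for x in v]


def cosine_similarity(a, b):
    """Dot product of the unit-length versions of a and b; ignores magnitude."""
    return dot_product(l2_normalize(a), l2_normalize(b))


a = [3.0, 4.0]
b = [6.0, 8.0]  # same direction as a, twice the length

print("cosine:   ", cosine_similarity(a, b))   # direction only
print("dot:      ", dot_product(a, b))         # direction and magnitude
print("euclidean:", euclidean_distance(a, b))  # gap between the two points
```

Because cosine similarity is the dot product of normalized vectors, normalizing embeddings before storing them makes the two metrics rank results identically.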

Metadata filtering allows users to constrain search results based on tags or properties assigned to vectors.
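As a rough illustration of the idea, the sketch below applies an equality filter to metadata before ranking by similarity. It runs entirely in memory with made-up records. The helper names and the plain `{key: value}` filter form are assumptions for this example and are simpler than Pinecone's own filter expressions.

```python
def dot(a, b):
    """Dot-product similarity between two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def matches(metadata, conditions):
    """True when every key in `conditions` has the same value in `metadata`."""
    return all(metadata.get(key) == value for key, value in conditions.items())


def filtered_search(records, query, conditions, top_k=2):
    """Keep only records that pass the filter, then rank them by similarity."""
    candidates = [r for r in records if matches(r["metadata"], conditions)]
    candidates.sort(key=lambda r: dot(query, r["values"]), reverse=True)
    return [r["id"] for r in candidates[:top_k]]


# Hypothetical records: toy two-dimensional vectors with tags attached.
RECORDS = [
    {"id": "doc-1", "values": [0.9, 0.1], "metadata": {"lang": "en", "year": 2023}},
    {"id": "doc-2", "values": [0.8, 0.3], "metadata": {"lang": "de", "year": 2023}},
    {"id": "doc-3", "values": [0.2, 0.9], "metadata": {"lang": "en", "year": 2021}},
]

query = [1.0, 0.0]
print(filtered_search(RECORDS, query, {}))              # no filter
print(filtered_search(RECORDS, query, {"lang": "en"}))  # English documents only
```

With the filter in place, `doc-2` is excluded even though its vector is the second-closest match to the query.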

Pinecone scales horizontally: on pod-based indexes, capacity grows by adding pods or replicas, which lets an index hold billions of vectors.

A namespace is a logical partition within an index to separate different sets of data for isolation or organization.
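The partitioning behaviour can be pictured with a small in-memory stand-in. `ToyIndex` below is a hypothetical class written for this example, not part of any SDK. It only shows that writes and queries are scoped to one namespace at a time.

```python
from collections import defaultdict


class ToyIndex:
    """In-memory stand-in for an index whose vectors are grouped by namespace."""

    def __init__(self):
        # namespace name -> {vector id -> vector values}
        self._namespaces = defaultdict(dict)

    def upsert(self, vectors, namespace=""):
        """Insert or overwrite (id, values) pairs inside one namespace."""
        for vec_id, values in vectors:
            self._namespaces[namespace][vec_id] = values

    def query(self, vector, top_k=3, namespace=""):
        """Rank only the vectors stored in the given namespace."""
        stored = self._namespaces.get(namespace, {})
        scored = sorted(
            stored.items(),
            key=lambda item: sum(q * v for q, v in zip(vector, item[1])),
            reverse=True,
        )
        return [vec_id for vec_id, _ in scored[:top_k]]


index = ToyIndex()
index.upsert([("a-1", [1.0, 0.0]), ("a-2", [0.0, 1.0])], namespace="tenant-a")
index.upsert([("b-1", [1.0, 0.0])], namespace="tenant-b")

# The query sees tenant-a's vectors only, even though b-1 is an equally good match.
print(index.query([1.0, 0.0], namespace="tenant-a"))
```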

Yes, Pinecone is widely used in RAG pipelines, where content retrieved from Pinecone by vector similarity is passed to an LLM as context for generating responses.
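The step that connects retrieval to generation is usually prompt assembly. The sketch below assumes the retrieval step has already returned a ranked list of text chunks and shows one simple way to place them in a prompt. The function name, prompt wording, and sample chunks are all illustrative, and the call to the LLM itself is left out.

```python
def build_prompt(question, retrieved_chunks, max_chunks=3):
    """Place the top retrieved chunks ahead of the question as numbered context."""
    selected = retrieved_chunks[:max_chunks]
    context = "\n".join(
        f"[{number}] {chunk}" for number, chunk in enumerate(selected, start=1)
    )
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical chunks, standing in for the text attached to the best vector matches.
chunks = [
    "A namespace is a logical partition within an index.",
    "Metadata filtering constrains results by tags assigned to vectors.",
    "Hybrid search combines keyword relevance with vector similarity.",
    "This fourth chunk is dropped because max_chunks is 3.",
]

print(build_prompt("What is a namespace?", chunks))
```

Capping the number of chunks keeps the prompt within the model's context window and limits the cost of each request.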

Hybrid search combines keyword relevance (e.g., BM25) with vector similarity to improve accuracy and ranking.
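One common way to combine the two signals is a weighted sum of normalized scores. The sketch below assumes the keyword and vector scores have already been computed elsewhere, and the numbers are invented for the example. The `alpha` weight and the min-max normalization are one design choice among several, not necessarily how Pinecone blends results.

```python
def min_max_normalize(scores):
    """Rescale scores to [0, 1] so the two signals are comparable."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_rank(keyword_scores, vector_scores, alpha=0.5):
    """Rank documents by alpha * vector score + (1 - alpha) * keyword score."""
    kw = min_max_normalize(keyword_scores)
    vec = min_max_normalize(vector_scores)
    doc_ids = set(kw) | set(vec)
    combined = {
        doc_id: alpha * vec.get(doc_id, 0.0) + (1 - alpha) * kw.get(doc_id, 0.0)
        for doc_id in doc_ids
    }
    return sorted(combined, key=combined.get, reverse=True)


# Hypothetical scores: BM25-style keyword scores and cosine similarities.
keyword_scores = {"a": 12.0, "b": 3.0, "c": 0.5}
vector_scores = {"a": 0.60, "b": 0.92, "c": 0.88}

print(hybrid_rank(keyword_scores, vector_scores, alpha=0.0))  # keyword only
print(hybrid_rank(keyword_scores, vector_scores, alpha=1.0))  # vector only
print(hybrid_rank(keyword_scores, vector_scores, alpha=0.5))  # blended
```

Setting `alpha` closer to 1 favors semantic matches, while values closer to 0 favor exact keyword overlap.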

Using the Pinecone Python SDK, you can initialize an index, insert vectors, query them, and manage metadata using simple API calls.
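A minimal sketch of those calls might look like the following. It assumes a recent version of the `pinecone` Python package (the `Pinecone` client class), an API key in the `PINECONE_API_KEY` environment variable, and an index that already exists. The helper names, the `"demo"` namespace, and the record layout are assumptions made for this example, and index creation is omitted because it depends on the deployment type you choose.

```python
import os


def connect(index_name):
    """Return a handle to an existing index (assumes the `pinecone` package)."""
    # Imported here so the helpers below can be used without the package installed.
    from pinecone import Pinecone

    client = Pinecone(api_key=os.environ["PINECONE_API_KEY"])
    return client.Index(index_name)


def add_documents(index, docs, namespace="demo"):
    """Upsert (id, embedding, metadata) triples into one namespace."""
    vectors = [
        {"id": doc_id, "values": values, "metadata": metadata}
        for doc_id, values, metadata in docs
    ]
    index.upsert(vectors=vectors, namespace=namespace)
    return len(vectors)


def search(index, query_vector, top_k=3, namespace="demo", metadata_filter=None):
    """Query nearest neighbours, optionally restricted by a metadata filter."""
    return index.query(
        vector=query_vector,
        top_k=top_k,
        namespace=namespace,
        filter=metadata_filter,
        include_metadata=True,
    )
```

In use, you would call `connect("my-index")` once (the index name is a placeholder), pass the returned handle to `add_documents` with embeddings whose length matches the index dimension, and then call `search` with a query embedding produced by the same model.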